Planning to deploy a custom foundation model

Review the considerations and requirements for deploying a custom foundation model for inferencing with watsonx.ai.

As you prepare to deploy a custom foundation model, review these planning considerations:

- Review the Requirements and usage notes for custom foundation models.

- Review the Supported architectures for custom foundation models to make sure that your model is compatible.

- Collect the details that are required as prerequisites for deploying a custom foundation model.

- Select a hardware specification for your custom foundation model.

- Review the deployment limitations.

- Enable task credentials so that you can deploy custom foundation models.

Requirements and usage notes for custom foundation models

Deployable custom models must meet these requirements:

- Uploading and using your own custom model is available only in the Standard plan for watsonx.ai.

- The model must be built with a supported model architecture type.

- The file list for the model must contain a config.json file.

- General-purpose models: the model must be in safetensors format with the supported transformers library and must include a tokenizer.json file. If the model is not in safetensors format and does not include the tokenizer.json file but is otherwise compatible, a conversion utility makes the necessary changes as part of the model preparation process.

- Time-series models: the model directory must contain the tsfm_config.json file. Time-series models that are hosted on Hugging Face (model_type: tinytimemixer) might not include this file. If the file is missing when the model is downloaded and deployed, forecasting fails. To avoid forecasting issues, you must perform an extra step when you download the model.

Important:

- General-purpose models: you must make sure that your custom foundation model is saved with the supported transformers library. If the model.safetensors file for your custom foundation model uses an unsupported data format in the metadata header, your deployment might fail. For more information, see Troubleshooting watsonx.ai Runtime.

- Make sure that the project or space where you want to deploy your custom foundation model has an associated watsonx.ai Runtime instance. To check, open the Manage tab in your project or space.
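If you have the model files in a local folder before you upload them, you can check for the required files up front. The following is a minimal sketch under that assumption; check_model_files is a hypothetical helper, not part of any watsonx.ai SDK. It only inspects file names, so it cannot confirm that the weights were saved with a supported transformers library. Missing tokenizer.json or .safetensors files are reported as warnings because, for otherwise compatible general-purpose models, the conversion utility is described as handling them.

```python
import tempfile
from pathlib import Path


def check_model_files(model_dir, time_series=False):
    """Check a local model folder for the files listed in the requirements.

    Returns a dict with "errors" (blocking problems) and "warnings" (items the
    conversion utility is described as handling for general-purpose models).
    """
    names = {p.name for p in Path(model_dir).iterdir() if p.is_file()}
    result = {"errors": [], "warnings": []}

    # Every deployable custom model needs config.json.
    if "config.json" not in names:
        result["errors"].append("missing config.json")

    if time_series:
        # Time-series models need tsfm_config.json, or forecasting fails.
        if "tsfm_config.json" not in names:
            result["errors"].append("missing tsfm_config.json")
    else:
        # General-purpose models: tokenizer.json plus safetensors weights
        # (sharded files such as model-00001-of-00002.safetensors also match).
        if "tokenizer.json" not in names:
            result["warnings"].append("missing tokenizer.json")
        if not any(n.endswith(".safetensors") for n in names):
            result["warnings"].append("no .safetensors weight files")
    return result


if __name__ == "__main__":
    # Demo on a throwaway folder that contains only config.json.
    with tempfile.TemporaryDirectory() as tmp:
        (Path(tmp) / "config.json").write_text("{}")
        print(check_model_files(tmp))
```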

Supported model architectures

The following tables list the model architectures that you can deploy as custom models for inferencing with watsonx.ai. The model architectures are listed together with information about their supported quantization methods, parallel tensors, deployment configuration sizes, and software specifications.

Various software specifications are available for your deployments:

- The watsonx-cfm-caikit-1.0 software specification is based on the TGI runtime engine.

- The watsonx-cfm-caikit-1.1 software specification is based on the vLLM runtime engine. It offers better performance, but it is not available with every model architecture.

- The watsonx-tsfm-runtime-1.0 software specification is designed for time-series models. It is based on the watsonx-tsfm-runtime-1.0 inference runtime.

Table 1. General-purpose models:

| Model architecture type | Foundation model examples | Quantization method | Parallel tensors (multiGpu) | Deployment configurations | Software specifications |
|---|---|---|---|---|---|
| bloom | bigscience/bloom-3b, bigscience/bloom-560m | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.0, watsonx-cfm-caikit-1.1 |
| codegen | Salesforce/codegen-350M-mono, Salesforce/codegen-16B-mono | N/A | No | Small | watsonx-cfm-caikit-1.0 |
| exaone | lgai-exaone/exaone-3.0-7.8B-Instruct | N/A | No | Small | watsonx-cfm-caikit-1.1 |
| falcon | tiiuae/falcon-7b | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.0, watsonx-cfm-caikit-1.1 |
| gemma | google/gemma-2b | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.1 |
| gemma2 | google/gemma-2-9b | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.1 |
| gpt_bigcode | bigcode/starcoder, bigcode/gpt_bigcode-santacoder | gptq | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.0, watsonx-cfm-caikit-1.1 |
| gpt-neox | rinna/japanese-gpt-neox-small, EleutherAI/pythia-12b, databricks/dolly-v2-12b | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.0, watsonx-cfm-caikit-1.1 |
| gptj | EleutherAI/gpt-j-6b | N/A | No | Small | watsonx-cfm-caikit-1.0, watsonx-cfm-caikit-1.1 |
| gpt2 | openai-community/gpt2-large | N/A | No | Small | watsonx-cfm-caikit-1.0, watsonx-cfm-caikit-1.1 |
| granite | ibm-granite/granite-3.0-8b-instruct, ibm-granite/granite-3b-code-instruct-2k, granite-8b-code-instruct, granite-7b-lab | N/A | No | Small | watsonx-cfm-caikit-1.1 |
| jais | core42/jais-13b | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.1 |
| llama | DeepSeek-R1 (distilled variant), meta-llama/Meta-Llama-3-8B, meta-llama/Meta-Llama-3.1-8B-Instruct, llama-2-13b-chat-hf, TheBloke/Llama-2-7B-Chat-AWQ, ISTA-DASLab/Llama-2-7b-AQLM-2Bit-1x16-hf | gptq | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.0, watsonx-cfm-caikit-1.1 |
| mistral | mistralai/Mistral-7B-v0.3, neuralmagic/OpenHermes-2.5-Mistral-7B-marlin | N/A | No | Small | watsonx-cfm-caikit-1.0, watsonx-cfm-caikit-1.1 |
| mixtral | TheBloke/Mixtral-8x7B-v0.1-GPTQ, mistralai/Mixtral-8x7B-Instruct-v0.1 | gptq | No | Small | watsonx-cfm-caikit-1.1 |
| mpt | mosaicml/mpt-7b, mosaicml/mpt-7b-storywriter, mosaicml/mpt-30b | N/A | No | Small | watsonx-cfm-caikit-1.0, watsonx-cfm-caikit-1.1 |
| mt5 | google/mt5-small, google/mt5-xl | N/A | No | Small | watsonx-cfm-caikit-1.0 |
| nemotron | nvidia/Minitron-8B-Base | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.1 |
| olmo | allenai/OLMo-1B-hf, allenai/OLMo-7B-hf | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.1 |
| persimmon | adept/persimmon-8b-base, adept/persimmon-8b-chat | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.1 |
| phi | microsoft/phi-2, microsoft/phi-1_5 | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.1 |
| phi3 | microsoft/Phi-3-mini-4k-instruct | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.1 |
| qwen | DeepSeek-R1 (distilled variant) | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.1 |
| qwen2 | Qwen/Qwen2-7B-Instruct-AWQ | AWQ | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.1 |
| t5 | google/flan-t5-large, google/flan-t5-small | N/A | Yes | Small, Medium, Large | watsonx-cfm-caikit-1.0 |

Table 2. Time-series models:

| Model architecture type | Foundation model examples | Quantization method | Parallel tensors (multiGpu) | Deployment configurations | Software specifications |
|---|---|---|---|---|---|
| tinytimemixer | ibm-granite/granite-timeseries-ttm-r2 | N/A | N/A | Small, Medium, Large, Extra large | watsonx-tsfm-runtime-1.0 |

- IBM only certifies the model architectures that are listed in Table 1 and Table 2. You can use models with other architectures that are supported by the vLLM inference framework, but IBM does not support deployment failures as a result of deploying foundation models with unsupported architectures or incompatible features.

- Deployments of llama 3.1 models might fail. To address this issue, see the steps that are listed in Troubleshooting.

- You cannot deploy codegen, mt5, and t5 type models with the watsonx-cfm-caikit-1.1 software specification.

- If your model does not support parallel tensors, the only configuration that you can use is Small. If your model has more parameters than the Small configuration supports, the deployment fails. This means that you cannot deploy some of your custom models. For more information on limitations, see Resource utilization guidelines.
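To make Table 1 easier to query, the following sketch transcribes it into a Python dictionary keyed by architecture type. SUPPORTED_ARCHITECTURES and deployment_options are hypothetical names, and the data is copied by hand, so it can fall out of date if the table changes. The configuration list is derived from the parallel-tensors column, which matches every row in Table 1 and the rule that models without parallel tensors can use only Small. The keys are written exactly as in the table (for example, gpt-neox), which might not match the model_type string in a particular model's config.json character for character.

```python
CAIKIT_10 = "watsonx-cfm-caikit-1.0"  # TGI-based
CAIKIT_11 = "watsonx-cfm-caikit-1.1"  # vLLM-based

# Hand-transcribed from Table 1: architecture -> (parallel tensors, software specs).
SUPPORTED_ARCHITECTURES = {
    "bloom": (True, (CAIKIT_10, CAIKIT_11)),
    "codegen": (False, (CAIKIT_10,)),
    "exaone": (False, (CAIKIT_11,)),
    "falcon": (True, (CAIKIT_10, CAIKIT_11)),
    "gemma": (True, (CAIKIT_11,)),
    "gemma2": (True, (CAIKIT_11,)),
    "gpt_bigcode": (True, (CAIKIT_10, CAIKIT_11)),
    "gpt-neox": (True, (CAIKIT_10, CAIKIT_11)),
    "gptj": (False, (CAIKIT_10, CAIKIT_11)),
    "gpt2": (False, (CAIKIT_10, CAIKIT_11)),
    "granite": (False, (CAIKIT_11,)),
    "jais": (True, (CAIKIT_11,)),
    "llama": (True, (CAIKIT_10, CAIKIT_11)),
    "mistral": (False, (CAIKIT_10, CAIKIT_11)),
    "mixtral": (False, (CAIKIT_11,)),
    "mpt": (False, (CAIKIT_10, CAIKIT_11)),
    "mt5": (False, (CAIKIT_10,)),
    "nemotron": (True, (CAIKIT_11,)),
    "olmo": (True, (CAIKIT_11,)),
    "persimmon": (True, (CAIKIT_11,)),
    "phi": (True, (CAIKIT_11,)),
    "phi3": (True, (CAIKIT_11,)),
    "qwen": (True, (CAIKIT_11,)),
    "qwen2": (True, (CAIKIT_11,)),
    "t5": (True, (CAIKIT_10,)),
}


def deployment_options(architecture):
    """Return the configurations and software specs Table 1 lists, or None."""
    entry = SUPPORTED_ARCHITECTURES.get(architecture)
    if entry is None:
        return None  # not certified by IBM; deployment failures are unsupported
    parallel_tensors, specs = entry
    # Without parallel tensors (multiGpu), Small is the only configuration.
    configs = ["Small", "Medium", "Large"] if parallel_tensors else ["Small"]
    return {"configurations": configs, "software_specifications": list(specs)}


print(deployment_options("t5"))
```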

Collecting the prerequisite details for a custom foundation model

-

Check for the existence of the file

config.jsonin the foundation model content folder. Deployment service will mandate for existence of the fileconfig.jsonin the foundation model content folder after it is uploaded to the cloud storage. -

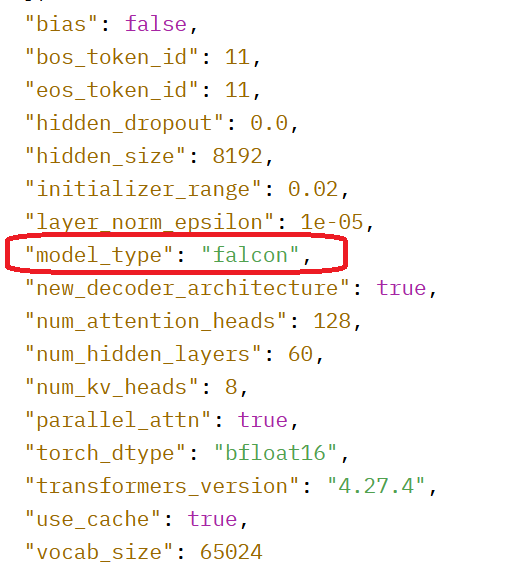

Open the

config.jsonfile to confirm that the foundation model uses a supported architecture. -

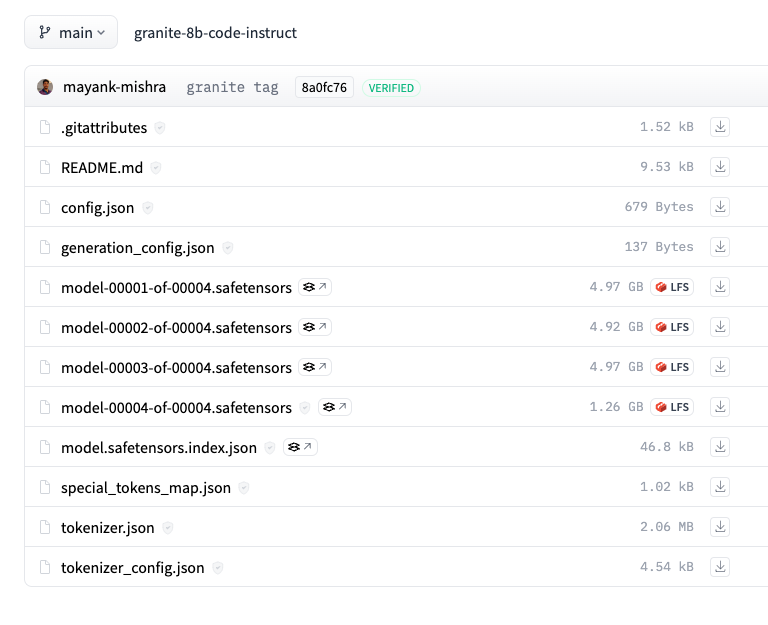

View the list of files for the foundation model to check for the

tokenizer.jsonfile and that the model content is in.safetensorsformat.Important:You must make sure that your custom foundation model is saved with the supported

transformerslibrary. If the model.safetensors file for your custom foundation model uses an unsupported data format in the metadata header, your deployment might fail. For more information, see Troubleshooting watsonx.ai Runtime.

See an example:

For the falcon-40b model stored on Hugging Face, click Files and versions to view the file structure and check for config.json:

The example model uses a version of the supported falcon architecture.

This example model contains the tokenizer.json file and is in the .safetensors format:

If the model does not meet these requirements, you cannot create a model asset and deploy your model.
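The same details can be collected locally before upload. The following is a sketch that assumes the model files are in a local folder; summarize_model_folder and read_safetensors_header are hypothetical helpers. The sketch reads model_type (the architecture type to compare against Table 1) and the architectures list that Hugging Face configs usually include from config.json. It also parses each .safetensors header, which in the safetensors format is an 8-byte little-endian length followed by a JSON header, so you can see the optional __metadata__ entry and the tensor dtypes. This page does not say which metadata values are unsupported, so the sketch only reports them for comparison with Troubleshooting watsonx.ai Runtime.

```python
import json
import struct
from pathlib import Path


def read_safetensors_header(path):
    """Return (__metadata__ dict, sorted tensor dtypes) from a .safetensors file.

    The file starts with an 8-byte little-endian unsigned integer giving the
    length of a UTF-8 JSON header, followed by that header.
    """
    with open(path, "rb") as f:
        (header_len,) = struct.unpack("<Q", f.read(8))
        header = json.loads(f.read(header_len))
    metadata = header.pop("__metadata__", {})
    dtypes = sorted({tensor["dtype"] for tensor in header.values()})
    return metadata, dtypes


def summarize_model_folder(model_dir):
    """Collect the prerequisite details from a local model folder."""
    root = Path(model_dir)
    config = json.loads((root / "config.json").read_text())
    summary = {
        # model_type is the architecture type to check against Table 1.
        "model_type": config.get("model_type"),
        "architectures": config.get("architectures", []),
        "has_tokenizer_json": (root / "tokenizer.json").is_file(),
        "safetensors": {},
    }
    for weights in sorted(root.glob("*.safetensors")):
        summary["safetensors"][weights.name] = read_safetensors_header(weights)
    return summary
```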

Resource utilization guidelines

Time-series models

The inference runtime for time-series models supports these hardware specifications: Small, Medium, Large, and Extra large.

Assign a hardware specification to your custom time-series model, based on the maximum number of concurrent users and payload characteristics:

| Univariate Time Series | Multivariate Time Series (Series x Targets) | Small | Medium | Large | Extra large |
|---|---|---|---|---|---|
| 1000 | 23x100 | 6 | 12 | 25 | 50 |
| 500 | 15x80 | 10 | 21 | 42 | 85 |
| 250 | 15x40 | 13 | 26 | 53 | 106 |
| 125 | 15x20 | 13 | 27 | 54 | 109 |
| 60 | 15x10 | 14 | 28 | 56 | 112 |
| 30 | 15x5 | 14 | 28 | 56 | 113 |
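One way to apply this table is a lookup. The following sketch assumes that each cell is the maximum number of concurrent users that a hardware specification supports for a payload of that size; this is an interpretation of the table, not a documented rule. It also assumes that you round your univariate series length up to the next listed row to stay conservative. pick_ts_spec is a hypothetical helper, and the multivariate column is kept only for reference.

```python
SPECS = ["Small", "Medium", "Large", "Extra large"]

# (univariate length, multivariate series x targets, capacity per spec),
# copied from the table above.
TS_TABLE = [
    (1000, "23x100", (6, 12, 25, 50)),
    (500, "15x80", (10, 21, 42, 85)),
    (250, "15x40", (13, 26, 53, 106)),
    (125, "15x20", (13, 27, 54, 109)),
    (60, "15x10", (14, 28, 56, 112)),
    (30, "15x5", (14, 28, 56, 113)),
]


def pick_ts_spec(series_length, concurrent_users):
    """Return the smallest spec assumed to handle the load, or None."""
    # Round the payload up to the closest listed row that is at least as large.
    rows = [row for row in TS_TABLE if row[0] >= series_length]
    if not rows:
        raise ValueError("payload is larger than any row in the table")
    _, _, capacity = min(rows, key=lambda row: row[0])
    for spec, max_users in zip(SPECS, capacity):
        if concurrent_users <= max_users:
            return spec
    return None  # more users than the Extra large column covers


print(pick_ts_spec(100, 27))  # rounds up to the 125 row -> "Medium"
```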

General-purpose models

For general-purpose models, three configurations are available to support your custom foundation model: Small, Medium, and Large. To determine the most suitable configuration for your custom foundation model, see the following guidelines:

- Assign the Small configuration to any double-byte precision model under 26B parameters, subject to testing and validation.

- Assign the Medium configuration to any double-byte precision model between 27B and 53B parameters, subject to testing and validation.

- Assign the Large configuration to any double-byte precision model between 54B and 106B parameters, subject to testing and validation.

If the selected configuration fails during the testing and validation phase, consider exploring the next higher configuration available. For example, try the Medium configuration if the Small configuration fails.

Currently the Large configuration is the highest available configuration.

| Configuration | Examples of suitable models |
|---|---|
| Small | llama-3-8b, llama-2-13b, starcoder-15.5b, mt0-xxl-13b, jais-13b, gpt-neox-20b, flan-t5-xxl-11b, flan-ul2-20b, allam-1-13b |
| Medium | codellama-34b |
| Large | llama-3-70b, llama-2-70b |
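The sizing guidelines and the fallback advice can be combined into an ordered list of configurations to try. In this sketch, double-byte precision means 16-bit weights (2 bytes per parameter). The page gives the ranges as "under 26B", "between 27B and 53B", and "between 54B and 106B"; the sketch closes the small gaps between those ranges by treating each upper bound as inclusive, which is an assumption. configurations_to_try is a hypothetical helper, and passing its first suggestion still requires your own testing and validation.

```python
# Upper parameter limits (billions) per configuration, from the guidelines.
# Assumption: each upper bound is treated as inclusive to close the gaps.
CONFIG_LIMITS = [("Small", 26), ("Medium", 53), ("Large", 106)]


def configurations_to_try(params_billions, parallel_tensors=True):
    """Return configurations in test order: the suggested one first, then each
    next-higher configuration as a fallback. An empty list means no fit."""
    if not parallel_tensors:
        # Models without parallel tensors can only use Small.
        return ["Small"] if params_billions <= CONFIG_LIMITS[0][1] else []
    for i, (_, limit) in enumerate(CONFIG_LIMITS):
        if params_billions <= limit:
            return [name for name, _ in CONFIG_LIMITS[i:]]
    return []  # larger than Large, the highest available configuration


# codellama-34b, listed above as a Medium example:
print(configurations_to_try(34))  # ['Medium', 'Large']
```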

Limitations and restrictions for custom foundation models

Note these limits on how you can deploy and use custom foundation models with watsonx.ai.

Limitations for deploying custom foundation models

- Due to high demand for custom foundation model deployments and limited resources to accommodate them, watsonx.ai has a deployment limit of either four small models, two medium models, or one large model per IBM Cloud account. If you attempt to import a custom foundation model beyond these limits, you are notified and asked to share your feedback through a survey. Your feedback helps us understand your needs and plan for future capacity upgrades.

- Time-series models do not take any parameters. Do not provide any parameters when you deploy a custom time-series model; if you do, they have no effect.
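The account limit can be checked before you import another model. The page lists only three cases (four small, two medium, or one large model). The following sketch reads those cases as a shared budget where Small costs 1 unit, Medium 2, and Large 4, out of 4 units per account. That reading reproduces the three documented cases, but how mixed combinations are counted is an assumption, not documented behavior. fits_account_limit is a hypothetical helper.

```python
# Assumed reading of the limit: a 4-unit budget per IBM Cloud account.
# Reproduces "four small, two medium, or one large"; mixes are an assumption.
UNITS = {"Small": 1, "Medium": 2, "Large": 4}
ACCOUNT_UNITS = 4


def fits_account_limit(configurations):
    """Return True if the listed deployment sizes fit the assumed budget."""
    return sum(UNITS[c] for c in configurations) <= ACCOUNT_UNITS


print(fits_account_limit(["Small"] * 4))  # True
print(fits_account_limit(["Large", "Small"]))  # False
```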

Restrictions for using custom foundation model deployments

Note these restrictions on using custom foundation models after they are deployed with watsonx.ai:

- You cannot tune a custom foundation model.

- You cannot use watsonx.governance to evaluate or track a prompt template for a custom foundation model.


Next steps

Downloading a custom foundation model and setting up storage
